Tag: Optimization

-

Unraveling the Intricacies: The Hidden Gems of Branch Predictors in Modern CPUs

CPU design and optimization is a delicate ballet between hardware engineers and software developers. One of the stars of this performance is the branch predictor, a component of the CPU that guesses which way each upcoming branch will go so that the instruction pipeline stays full. However, like any sophisticated machinery, branch predictors can…

-

Is SQL Becoming a Niche Skill or a Hidden Treasure?

In its 50th year, SQL (Structured Query Language) continues to be a fundamental component of data management and backend development. Despite its importance, there’s an ongoing debate about whether SQL is becoming a niche skill. With the rise of NoSQL databases and the growing complexity of tech stacks, many argue that specialized SQL knowledge is…

-

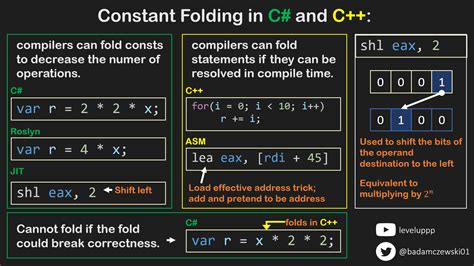

Unraveling the Intricacies of Custom Constant Folding in C/C++

Constant folding is a term that might sound highly technical and arcane to many, yet it’s an integral part of optimizing compilers for any high-performance code, particularly in C and C++. The concept is simple: a compiler evaluates constant expressions at compile time rather than runtime, thereby producing more efficient code. But what happens when…

-

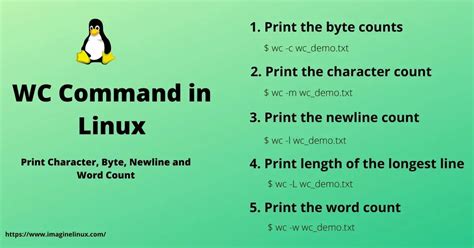

Turbocharging ‘wc’: The New Frontier in Unix Word Count Optimization

When it comes to text processing on Unix systems, few utilities are as venerable as ‘wc’ (word count). This seemingly simple program is a quintessential tool used to count lines, words, and characters in files. However, recent developments have revealed new avenues for optimizing ‘wc’, thanks to advanced techniques such as state machines and SIMD…
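To make the state-machine idea concrete, here is a minimal sketch in Python. It is not the implementation the post discusses: the two states (inside a word, outside a word), the ASCII-only whitespace set, and the function name `count` are all assumptions chosen for illustration.

```python
def count(data: bytes) -> tuple[int, int, int]:
    """Return (lines, words, bytes) for data using a two-state machine.

    Assumption: only ASCII whitespace separates words, and a "line" is
    counted per newline byte. Real wc also handles locales and
    multibyte characters.
    """
    whitespace = b" \t\n\r\v\f"
    lines = 0
    words = 0
    in_word = False  # the machine's single bit of state

    for byte in data:
        if byte == 0x0A:  # newline
            lines += 1
        if byte in whitespace:
            in_word = False
        elif not in_word:
            # Transition from "outside a word" to "inside a word":
            # this is the only place a word is counted.
            in_word = True
            words += 1

    return lines, words, len(data)
```

Calling `count(b"hello world\nfoo  bar\n")` returns `(2, 4, 21)`. Each byte triggers exactly one state transition, which is the property that table-driven and SIMD variants build on.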

-

Boosting Performance in Unix ‘wc’ Command: Is a State Machine the Answer?

In the world of Unix utilities, few tools are as iconic as `wc`, the word count program. Enthusiasts and professionals alike rely on it for its simplicity—counting lines, words, and characters in text files. But the unassuming `wc` command is also a hotbed of innovation, spawning countless debates on optimization techniques, from state machines to…

-

Exploring the Fascinating World of Hashing through SHAllenge

The SHAllenge initiative has emerged as an intriguing competition that has enticed coding enthusiasts and cryptographic aficionados alike. The premise is deceptively simple: compete to generate the lowest possible SHA256 hash. Yet, beneath this straightforward challenge lies a world brimming with technical intricacies, coding prowess, and strategic decisions that can spell the difference between fleeting…
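The core loop of such a search can be sketched in a few lines of Python. This is only an illustration: the `prefix/nonce` input format and the function name `lowest_hash` are hypothetical and are not taken from the competition's rules.

```python
import hashlib


def lowest_hash(prefix: str, attempts: int) -> tuple[str, str]:
    """Try `attempts` candidate strings and return (input, hex digest)
    for the one whose SHA-256 digest is smallest.

    Assumptions: candidates have the hypothetical form "prefix/nonce",
    and attempts is at least 1.
    """
    best_input = ""
    best_digest = ""
    for nonce in range(attempts):
        candidate = f"{prefix}/{nonce}"
        digest = hashlib.sha256(candidate.encode("utf-8")).hexdigest()
        # Hex digests have a fixed length and use lowercase digits, so
        # string comparison orders them the same way as their numeric values.
        if not best_digest or digest < best_digest:
            best_input, best_digest = candidate, digest
    return best_input, best_digest
```

For example, `lowest_hash("example", 100_000)` returns the best candidate found among 100,000 nonces. Since each extra leading zero hex digit takes roughly 16 times as many attempts on average, serious entries would likely need a much faster language or hardware than this sketch.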

-

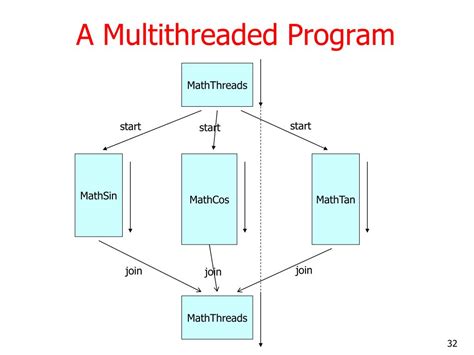

The Perils of Multithreading in Modern Programming

In software development, many programmers regard multithreading as a magic bullet for performance problems. This assumption is often misguided, as the experiences of many developers show. As some seasoned engineers have noted, it’s almost a rite of passage to think that ‘more threads = faster execution,’ only to discover that their application…

-

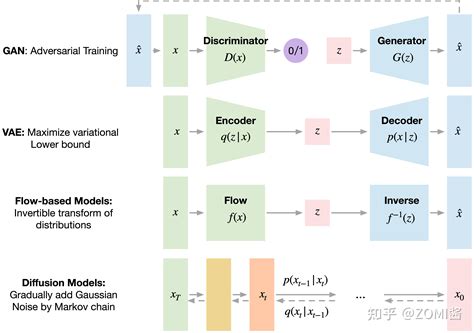

Exploring the Fascinating Intersection of Diffusion Models and Syntax Trees in Program Synthesis

The intersection of diffusion models and syntax trees is opening new frontiers in the domain of artificial intelligence and program synthesis. Researchers’ innovative use of these techniques—typically applied in different contexts like graphics and optimization algorithms—suggests a rich vein of untapped potential. By leveraging syntax trees, which help structure and understand programming languages, and integrating…

-

The Great Paradox: Why Modern Software Feels Slower Despite Faster Computers

Hardware has advanced enormously, giving us computers that are vastly more powerful and capable than those of the past. Yet a common sentiment among users and developers alike is that software seems slower than ever. It defies logic: how can applications feel sluggish when the hardware is so much…

-

Evolving Efficiency: The Future of Quantized Language Models in a Sustainable Tech Ecosystem

The arrival of ever larger and more intricate large language models (LLMs) has brought major advances in natural language understanding and generation. However, this rapid progress comes with significant concerns about the computational and energy costs of training these models. The push towards making these models more energy-efficient and cost-effective has led researchers to…